Design guidelines for human-AI interaction

In our ever-changing times, knowing how to integrate artificial intelligence into the newest, shiniest products with these 18 best practices might well give you a competitive edge. But first: can thoughtful design fuel trust in AI?

The role of artificial intelligence (AI) has evolved with mind-boggling speed, turning it from a low-key worker into a proactive agent. Digital assistants now show up in many different aspects of our lives, adding a layer of smart convenience to everyday tech.

Powered by machine learning models, AI analyses data to calculate statistical probabilities that can then be used to make predictions, fuel recommendations, and help with decision-making.

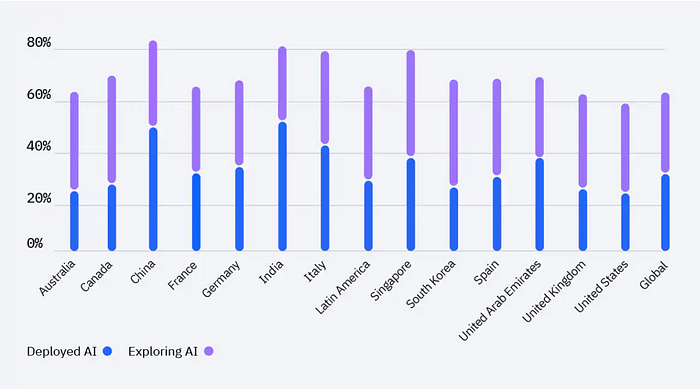

Today, one in three companies are using AI in their business, and another 42 percent are exploring it as an option. Advancements have made it more accessible, but other factors such as competitive and environmental pressure, the promise of cost reduction, and consumer expectations also serve as a push towards embracing machine intelligence.

Additional benefits of using AI range from reducing human error, to automating tasks and gathering greater business insight. 61 percent of employees also claim that AI boosts their productivity.

Whether your invisible digital assistant is suggesting your next playlist, purchase, or route home, it is truly everywhere, shaping experiences and outcomes on a routine basis.

Still, as much as we might love the idea of personalising services with data and clever algorithms, the appeal of pure comfort might not be enough for wider AI adoption amongst consumers. Perhaps the missing ingredient is not all that ambiguous and could actually be shaped with considerate design.

The key to AI success is trust

A degree of trust is needed in every human-human as well as human-computer interaction — no surprise there. Yet, conveniently, even with AI thrown into the mix, the same “formula” applies when it comes to building trust. It’s a new field, but the recipe has already been written up.

Though this is a rather generalised take, the four main factors that contribute to earning trust in another person or entity are:

- competence,

- benevolence and openness,

- integrity, and finally,

- charisma.

These components differ slightly from study to study, so consider this a flexible overview. How these characteristics are weighted and worded varies quite a bit, and sometimes traits such as predictability or consistency are also thrown in for good measure. (Unlike in my deep-dive into empathy, we shall keep it vague here.)

In a 2020 report, Ericsson analysed how these can be translated into AI-specifics so a trustworthy experience can be designed.

Competence: “Can you do the job?”

Within an AI setting, competence comes down to whether the system is capable of fulfilling the user’s needs. It needs to be able to:

- explain how it makes decisions in an easily understandable way,

- use its AI capabilities for solving real problems efficiently,

- give users the option to test the system in a safe and controllable environment, and

- show evidence that the system has improved the outcome.

Trust is fractured from the get-go if it cannot deliver on these ingrained promises.

Benevolence and openness: “Are you on my side?”

Designing a system that makes decisions with the user’s best interests in “mind” taps into this trait. It should also be flexible and accepting of changes on the fly — forgiving, even.

For this, users should be able to intervene, undo or dismiss actions taken by the AI and easily communicate their preferences. At the same time, the system must adapt to adjustments by taking implicit and explicit user feedback onboard.

Integrity: “How ethical are you?”

If the user feels the system is honest and transparent (better yet, ethical), you are on the right track. It is important to clearly communicate the system’s capabilities and limitations so that expectations stay realistic, and the AI can deliver on set promises.

Data collection and handling also need to be highlighted — including required permissions and security measures to protect user data, so consumers feel safe.

Charisma: “Do I like you?”

Does your AI system possess charm, visual appeal, and a suitable tone of voice? Usability should be coupled with attractiveness in aesthetics, such as having an understandable and organised look.

It is also important to check that the copywriting and voice interactions reflect:

- your intended message,

- the desired system personality,

- and the characteristics of the targeted user group.

These all must align to be appropriate and charismatic for the given product.

Now that we have distilled trust and gone through its applications in AI, let’s look at best practices with examples.

18 research-based usability guidelines

As tech keeps evolving, ideas around integrating AI into more products will be gradually shaped and refined — but there are already some common principles we can follow. In collaboration with Aether and Office, Microsoft compiled a list of best practices in 2019 to serve as an aid during the design process.

Aiming to help “evaluate existing ideas, brainstorm new ones, and collaborate with the multiple disciplines involved in creating AI,” they summarised and combed through relevant papers from the past 20 years.

The study goes into much more detail, with more examples, than the condensed version below, so if you are keen on diving deeper, you can find the paper in the references at the end of this post. Otherwise, keep reading for a quick summary of each stage and its applicable guidelines.

⮞ Initial / introductory phase

1. Help the user understand what the AI system is capable of doing to clear up any confusion.

Case in point: activity trackers that display movement metrics such as steps, calories burned, and exercise length (broken down by day) also tend to explain how these are measured. Follow this pattern of laying out key information in an accessible way, so users don’t feel lost from the beginning.

2. To set expectations, clarify how well the system performs at tasks, and how often it may make mistakes.

One common example can be seen in music recommendations being made with muted or hedging language such as Spotify’s “songs we think you’ll love” for their Discover Weekly playlists. Naturally, it’s even better if you can give them a clear rule of thumb, but tinkering with wordings can also shape and adjust expectations.

⮞ During interactions

3. Time when to act or interrupt based on the user’s current task and environment.

Autocomplete suggestions and satnavs adapting on the go are ways of putting this idea into practice. It can also be seen in products that give users a heads-up when they haven’t used the product for a while and may have missed a few new features.

4. Display relevant information, based on the user’s current activities.

Search engines pulling up show times near the user’s location when they look up a movie title contribute to a smoother experience by being proactive. Switching to a different context, e-commerce platforms recommending products that are related to the items in the customer’s basket is also a nod towards this principle.

5. Pay attention to conventions and provide the experience in a way that users would expect.

One of the most popular uses of artificial intelligence is in the form of voice assistants. Statista states these are likely to reach 8.4 billion devices by 2024 — higher than the world’s population.

Tailoring interactions can be as simple as voice assistants using semi-formal language when talking to users and asking further questions where needed.

For a more “physical” case, think about routes being selected to fit the user’s preference of getting to their destination — such as the system taking them through trails instead of busy roads when opting for a walk.

6. Ensure the AI system’s language and behaviours do not reinforce social stereotypes and biases.

Inching back into the world of e-commerce, consider algorithms serving related, yet gender-biased products when someone searches for diapers or tools. Common, but not ideal. Buying baby products shouldn’t automatically imply that you want to buy a hot pink dress for yourself up next or that using a hammer means you might also need a beard moisturiser.

⮞ When something goes wrong

7. Make it easy to request the AI system’s help and services when needed.

Wake commands are generally simple and easy to recall, allowing quick access to the AI. The more convenient it is to reach out to the system, the better. That’s what it’s there for, after all!

8. Dismissing or ignoring unwanted AI services should be as simple as possible.

Hiding recommendations and ads shouldn’t require much effort, either — and the same goes for getting rid of eager AI assistants.

9. Editing, refining, or recovering when there is a system hiccup should also be efficient and breezy.

This one is self-explanatory: allow users to backtrack out of system assumptions. Even if certain AI-fuelled corrections are sensible, they can still be wrong, and overriding them shouldn’t be difficult.
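As a rough illustration of backtracking out of a system assumption, here is a minimal sketch of an undoable AI correction. The `EditableText` class and its method names are hypothetical, made up for this example rather than taken from any real product or library.

```python
class EditableText:
    """Hypothetical text field that remembers its state before each AI correction."""

    def __init__(self, text=""):
        self.text = text
        self._history = []  # earlier versions, most recent last

    def apply_ai_correction(self, original, replacement):
        # Save the current text first, so the user can always get it back.
        self._history.append(self.text)
        self.text = self.text.replace(original, replacement, 1)

    def undo(self):
        # One step back; does nothing if there is nothing left to undo.
        if self._history:
            self.text = self._history.pop()
        return self.text


message = EditableText("See you at the Teh Cafe")
message.apply_ai_correction("Teh", "The")  # a sensible correction, but wrong here
message.undo()                             # the user overrides it in one step
```

The design choice worth noting is that the snapshot is taken before the correction is applied, so overriding the AI never costs the user more than a single action.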

10. When the AI system isn’t sure what a user wants, it should either clear up any confusion or reduce the services it offers.

Voice assistants have a habit of speaking up when they can’t hear what users are saying. This reduces errors on their part and prevents annoyances from popping up down the line.

Autocomplete features offering up multiple suggestions at a time is also a way to reduce mistakes, allowing the user to choose from a selected line-up rather than feeding them a single option.
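One way to picture guideline 10 is as a pair of confidence thresholds: act when the system is sure, offer a short line-up when it is only fairly sure, and ask when it is not sure at all. The sketch below assumes the model exposes a confidence score between 0 and 1 for each suggestion. The `Suggestion` type, the `respond` function, and the threshold values are all hypothetical and would need tuning for a real product.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    text: str
    confidence: float  # assumed model score between 0 and 1


def respond(suggestions, act_threshold=0.8, offer_threshold=0.4, max_options=3):
    """Pick how assertive to be, based on how confident the system is.

    Returns a (mode, options) tuple:
      "act"     - one high-confidence suggestion, used directly
      "offer"   - a short line-up for the user to choose from
      "clarify" - nothing is plausible enough, so ask the user instead
    """
    ranked = sorted(suggestions, key=lambda s: s.confidence, reverse=True)
    if ranked and ranked[0].confidence >= act_threshold:
        return "act", [ranked[0].text]
    plausible = [s.text for s in ranked if s.confidence >= offer_threshold]
    if plausible:
        return "offer", plausible[:max_options]
    return "clarify", []
```

With these example thresholds, a single suggestion scored at 0.9 is used straight away, two suggestions scored at 0.6 and 0.5 are both offered, and a lone suggestion at 0.2 leads to a clarifying question.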

11. Explain why the system worked the way it did.

The user should have a way of linking recommendations to previous input or interactions (think movie or purchase suggestions). Routes on navigation apps also have a choice of the fastest or most convenient options, empowering the person on the other side of the screen to filter on their preferred way forward. Clarify how the system came to the decision it made to reinforce credibility.

⮞ Over time

12. Remember recent interactions, allowing the user to refer back to them more easily.

Showing recent destinations, searches and other inputs can be a helpful nudge for easing cognitive load. It can also serve as a comfortable aid, so users don’t have to enter the same data over and over again, for example in food diaries.

13. Learn from user behaviour and personalise experiences according to their actions.

By tapping into previous searches and interactions, the system can also serve highly tailored ads on your screen, even if you don’t actively go about clicking on them.

Email services checking in to ask if you’d like to unsubscribe from newsletters or promotions that have remained unopened and are gathering proverbial dust is another welcome take on personalisation.

14. When introducing changes or updates to the AI system’s behaviour, try to avoid making any that could be disruptive.

Entire recommendation lists being altered by a single search or action is inconvenient at best. Allow search data to shape the system gradually, so one rogue decision or purchase doesn’t upend and erase all previous behaviours.
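A common way to let data shape a system gradually is an exponential moving average, where each new interaction only nudges the stored preferences by a small amount. This is a minimal sketch under that assumption, not a description of how any particular recommender works. The `update_preferences` function, the category weights, and the `learning_rate` value are hypothetical.

```python
def update_preferences(preferences, interaction, learning_rate=0.1):
    """Blend one interaction into the stored category weights.

    A small learning_rate means a single search or purchase shifts
    the profile only slightly instead of overwriting it.
    """
    categories = set(preferences) | set(interaction)
    return {
        category: (1 - learning_rate) * preferences.get(category, 0.0)
        + learning_rate * interaction.get(category, 0.0)
        for category in categories
    }


profile = {"jazz": 0.9, "rock": 0.1}
# One rogue listen to a metal track nudges the profile, but jazz still dominates.
profile = update_preferences(profile, {"metal": 1.0})
```

Raising `learning_rate` makes the system react faster to new behaviour, at the cost of being easier to throw off course, so the right value is a product decision as much as a technical one.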

15. Encourage detailed feedback. Make it possible for the AI system’s users to express their preferences through regular interactions.

Providing like and dislike buttons, or a way to flag unwelcome ads and suggestions, is another expected and helpful way to optimise the user experience. Even if the AI gets something wrong, at least users can nudge it towards their preferences.

16. Inform the user as soon as possible about the effects their activities will have on the AI system’s future behaviour.

As soon as someone taps on that dislike button, the system should acknowledge it and confirm they will see less of that kind of content. The same goes for all user actions: any changes and adjustments need to be communicated, so users are not left in the dark.
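To make the link between feedback and its consequences concrete, here is a minimal sketch in which every like or dislike both adjusts a topic weight and returns a confirmation message to show the user straight away. The `record_feedback` function, the neutral starting weight of 0.5, and the step size are hypothetical choices for illustration.

```python
def record_feedback(weights, topic, liked, step=0.2):
    """Adjust a topic weight after a like or dislike and return a confirmation.

    Weights are kept between 0 and 1; topics not seen before start at a neutral 0.5.
    """
    current = weights.get(topic, 0.5)
    change = step if liked else -step
    weights[topic] = min(1.0, max(0.0, current + change))
    direction = "more" if liked else "less"
    return f"Got it. You'll see {direction} content about {topic}."


weights = {}
confirmation = record_feedback(weights, "camping", liked=False)
```

Returning the message from the same function that changes the weight keeps the two in step, so the interface cannot confirm a change that never happened.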

17. Allow the user to customise what the AI system monitors and how it behaves by providing global controls.

Turning on location history can help create a montage of images and activities relevant to each place visited, for example, but users might not be keen on sharing their whereabouts. Try to ensure default options are not invasive, but can still be turned on for certain features.
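Global controls with non-invasive defaults can be sketched as a settings object where every sensitive signal starts switched off, and features check those settings before running. The names below (`MonitoringControls`, `FEATURE_REQUIREMENTS`, `feature_enabled`) and the example features are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class MonitoringControls:
    """Hypothetical global settings: every sensitive signal is off until the user opts in."""
    location_history: bool = False
    voice_recordings: bool = False
    purchase_history: bool = False


# Hypothetical mapping from a feature to the signals it depends on.
FEATURE_REQUIREMENTS = {
    "place_based_photo_montage": ["location_history"],
    "reorder_suggestions": ["purchase_history"],
}


def feature_enabled(feature, controls):
    """A feature runs only if the user has enabled every signal it needs."""
    return all(getattr(controls, signal) for signal in FEATURE_REQUIREMENTS[feature])


controls = MonitoringControls()
controls.location_history = True  # the user opts in to this one signal only
```

Here the photo montage becomes available once location history is turned on, while reorder suggestions stay off because purchase history was never enabled.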

18. Notify users when the AI system adds new capabilities or updates existing ones.

Updates to privacy and regulations, new product additions, and tooltips to point out features all boost transparency, providing guidance to put the user’s mind at ease.

I, Robot?

AI is already around, and it’s here to stay. Despite the advancements in the field, we are still working with a form of AI known as Artificial Narrow Intelligence (ANI) or “weak” AI.

The above design principles all cater to this category, but eventually we will also have to tailor them to General AI (AGI, the next step) and then Super AI (ASI, and yes, it does sound like a superhero).

For now, sit back, relax, and familiarise yourself with our current ANI limitations. Before you can blink twice, chances are these will have evolved, too.

Thanks for reading! ⭐


References & Credits:

- IBM Global AI Adoption Index 2022

- UX design in AI: A trustworthy face for the AI brain by Ericsson

- Amershi, S., Weld, D., Vorvoreanu, M., Fourney, A., Nushi, B., Collisson, P., … & Horvitz, E. (2019, May). Guidelines for human-AI interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (pp. 1–13).

- Dietz, G., & Den Hartog, D. N. (2006). Measuring trust inside organisations. Personnel Review.

- Zimmerman, J., Oh, C., Yildirim, N., Kass, A., Tung, T., & Forlizzi, J. (2020). UX designers pushing AI in the enterprise: a case for adaptive UIs. Interactions, 28(1), 72–77.

- Images by vectorjuice on Freepik