My first task-based usability test

Well, the first one completed at work anyway.

The developers had finished creating a new feature for our software, which was deployed onto the main user product. However, user testing had not been completed for this new feature, and there was a mixture of confusion and frustration in the air. I asked my mentor for some time to run a quick task-based usability test of the new feature internally. Given the go-ahead, I started!

First of all, I needed to recap what a task-based usability test was and decide what I wanted to have learnt by the end. I did a quick Google search:

What is it?

• One method of usability testing — engage users earlier.

• Understand what works and doesn’t.

• Observe users on platform — exploratory.

• Not all real life scenarios are tested.

• Can be a prescriptive form of testing — potentially misses open-ended investigation.

I already knew which new feature I was testing; now I needed to sit down and figure out what sort of tasks would help me test its usability.

So, how did I prepare?

• Created one scenario — very common scenario performed by internal users of the software on a regular basis.

• Four small tasks/steps to achieve the scenario and test usability and interaction.

• Printed each task on separate paper to be handed to the user on the test day — for example, A. Please go into a privacy setting and toggle into public view.

• Participants were gathered internally from the client service team who use the platform on a daily basis and handle customer queries — total of 5 recruited.

How did I conduct the test on the day?

• Set up the platform ready for participants, and found a quiet place to sit with minimal disruption on an open-plan office floor. This was hard to do in the afternoon; mornings were the best time.

• Before they started, I briefed each individual on how the test would run. Once they were happy, the printed tasks were given to them to go through at their own pace.

• Participants were given my laptop to complete the test.

• Observed individuals completing the tasks and identified problem areas.

• Successful participants: if a participant completed a task without aid from me (the instructor), I would mark that task down as successful.

• Struggling participants: I only hinted or helped as a last resort, if they asked for help or had been lingering on a particular task for some time, for example, “Try this area here” or “It is similar to how you would do [another task]”. This was particularly the case when the current task was linked to the next one. Otherwise, I preferred to sit back and see how participants handled the tasks before stepping in; this opened up the floor to see what alternative ways/hacks users used to solve their problem, seeing first-hand how they “shouldn’t” be using the platform versus how they “should”.

• Failing participants: if a participant could not complete a task without aid from me (the instructor), I would mark that task down as failed.

• Recorded user actions, frustrations and comments while they performed the tasks: this turned out to be harder than I first thought, as you have to write fast and keep up with your users. I found making notes in short form very efficient and handy.

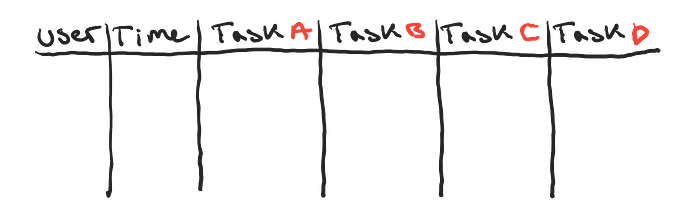

Since I was a one-woman army and had no resources to undertake this task, I used the old, trusted and well-known companion: pen and paper! To order my notes and make them readable to others, I later transferred them onto an Excel sheet.

What about the results?

• Organised notes into the following columns in Excel: User, Time, Task A, Task B, Task C and Task D.

• Measure of success by completion of task: the tasks could be completed using whatever means of interaction with the software. No specific method was correct, as long as the user completed the task.

• Analysis of results: I identified the recurring issues and the less common issues that users came face-to-face with, and provided recommendations to consider.

• Common issues: these included not being aware whether an action had been committed (no user feedback, so the user was waiting for something to happen) and being unable to locate information (information hidden from view).
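The tally behind these results can also be scripted rather than counted by hand. Below is a minimal sketch in Python, assuming each row mirrors the spreadsheet columns above and each task cell holds either "success" (completed without aid) or "failed". The rows, participant labels and times are hypothetical placeholders, not the results from my test, and the function names are made up for illustration.

```python
from collections import Counter

TASKS = ["Task A", "Task B", "Task C", "Task D"]

# Hypothetical placeholder rows, NOT real results.
# Columns follow the spreadsheet: User, Time, Task A, Task B, Task C, Task D.
notes = [
    {"User": "P1", "Time": "10:00", "Task A": "success", "Task B": "failed",
     "Task C": "success", "Task D": "success"},
    {"User": "P2", "Time": "10:30", "Task A": "success", "Task B": "failed",
     "Task C": "success", "Task D": "failed"},
    {"User": "P3", "Time": "11:00", "Task A": "success", "Task B": "success",
     "Task C": "failed", "Task D": "success"},
]


def completion_rates(rows, tasks=TASKS):
    """Return, per task, the share of participants who completed it unaided."""
    if not rows:
        return {task: 0.0 for task in tasks}
    rates = {}
    for task in tasks:
        outcomes = Counter(row[task] for row in rows)
        rates[task] = outcomes["success"] / len(rows)
    return rates


def tasks_by_difficulty(rows, tasks=TASKS):
    """Return the tasks ordered from lowest to highest completion rate."""
    rates = completion_rates(rows, tasks)
    return sorted(tasks, key=lambda task: rates[task])


if __name__ == "__main__":
    for task, rate in completion_rates(notes).items():
        print(f"{task}: {rate:.0%} completed unaided")
    print("Look at first:", tasks_by_difficulty(notes)[0])
```

The tasks with the lowest completion rate are a reasonable place to start when looking for recurring issues, although with only five participants the written observations matter more than the percentages.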

Now I needed to produce something to help visualise some of the recommendations I had suggested, and something to present this task-based usability test internally. This would give an overview of the whole process I had gone through and a look into some of the real problems users had.

What deliverables did I produce?

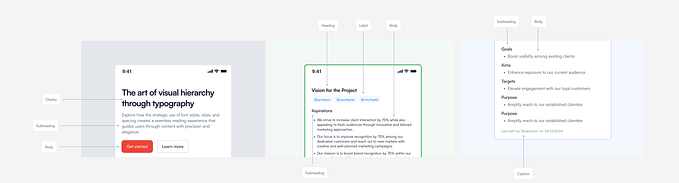

• Low-fidelity prototype: supplemented the recommendations using various screenshots of the platform, pieced together into acceptable ideas of what could potentially work. Looking back, I produced too many low-fi prototypes for one recommendation; too many options made it harder to come to a conclusion about what would work better.

• Presentation — placed everything I have spoken about in this article into 8 slides!

Overall, I adopted this form of usability testing for many reasons, including:

• Insight into what’s causing users trouble.

• Determine how to improve the design.

• Participants buy in to features on the platform.

• Communicate features to team.

• Less time spent developing problem features.

• Usable products sell: selling products increases volume of trade.

Finally 😅 — the feeling you get when you have successfully completed a usability test with insight into how a feature can be changed for the better!

[All drawings are created by myself and are original, unless otherwise mentioned.]